Misconceptions about the CAP Theorem

If you've ever listened to a NoSQL talk, you've probably come across the CAP theorem. The argument usually goes like this:

- Traditional databases guarantee consistency.

- The CAP theorem tells you that you cannot have consistency, availability, and partition tolerance at the same time.

- But we want to build scalable databases, so we forget about consistency.

- Oh and by the way, who needs consistency anyway?

To be honest, to me this always looked like a poor excuse to avoid discussing the design decisions of some NoSQL database. It's probably just me, but I much prefer at least an attempt at an unbiased analysis of the pros and cons so that I can make an informed decision about whether it fits my needs or not. But pulling this theorem out of the hat is like saying "we don't even need to discuss this, because this theorem says it's impossible, ok!"

While searching for discussions of the CAP theorem, I found this excellent (but lengthy) article by Eric Brewer, who originally formulated the CAP theorem: CAP Twelve Years Later: How the "Rules" Have Changed.

Here is my summary:

First of all, the interpretation that the CAP theorem says "you can only have 2 out of 3" is misleading. It's not like the original proof discussed all possible choices and showed that you can have only 2 out of 3.

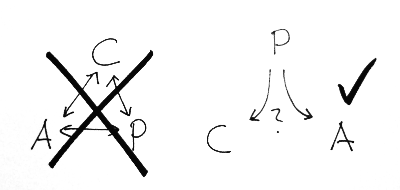

Instead, the original proof discusses the following situation: Say you have a distributed system which is in a consistent state (whatever that means), and now there is a **P**artition of the system, either an actual network failure, or some other way in which machines cannot talk to one another anymore.

Now consider what options you have when there is a write request. You could wait for the partition to end in order to make sure that your system stays in a consistent state (thereby sacrificing **A**vailability), or you could apply the update only to the part of the system you can reach (thereby sacrificing **C**onsistency). So in case of P you can have either C or A, but not both.
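The choice described above can be sketched in a few lines of Python. This is only a toy model under my own assumptions: the `Replica` class, the `prefer_availability` flag, and the `make_pair` helper are hypothetical names made up for illustration and do not come from the article or from any real database.

```python
class Unavailable(Exception):
    """Raised when a replica refuses a write to protect consistency."""


class Replica:
    def __init__(self, name, prefer_availability):
        self.name = name
        self.prefer_availability = prefer_availability  # hypothetical policy flag
        self.data = {}
        self.peer = None
        self.partitioned = False  # True while the peer is unreachable

    def write(self, key, value):
        if not self.partitioned:
            # No partition: update both copies, so C and A both hold.
            self.data[key] = value
            self.peer.data[key] = value
            return "replicated"
        if self.prefer_availability:
            # Keep A: accept the write locally; the peer is now stale.
            self.data[key] = value
            return "accepted locally"
        # Keep C: refuse the write rather than let the copies diverge.
        raise Unavailable(f"{self.name} cannot reach its peer")


def make_pair(prefer_availability):
    """Build two replicas that point at each other."""
    a = Replica("a", prefer_availability)
    b = Replica("b", prefer_availability)
    a.peer, b.peer = b, a
    return a, b
```

With `prefer_availability=True`, a write during a partition succeeds but leaves the two copies different; with `False`, the same write raises `Unavailable` and both copies stay identical. Real systems sit somewhere between these two extremes.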

Note that there is really no way in which you could "choose P". It was always about how to handle partitions (which are often not really partitions, but timeouts), and that includes how to detect partitions, how to behave when you are in a "partition state", and how to bring the system back to a consistent state after a partition.
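To make the "partitions are often just timeouts" point concrete, here is a minimal sketch of timeout-based detection. The `PartitionDetector` class and its method names are my own invention, not something from the article; the clock is injectable only so that the behavior can be tried out without actually waiting.

```python
import time


class PartitionDetector:
    """Suspects a partition when a peer has been silent for too long."""

    def __init__(self, timeout_seconds, clock=time.monotonic):
        self.timeout_seconds = timeout_seconds
        self.clock = clock
        self.last_heard = clock()

    def heartbeat_received(self):
        # Any message from the peer counts as a sign of life.
        self.last_heard = self.clock()

    def suspects_partition(self):
        # From this side, a slow peer and an unreachable peer look identical.
        return self.clock() - self.last_heard > self.timeout_seconds
```

The detector cannot tell a real network split from a peer that is merely slow, which is why the timeout value is itself a design decision: too short and you enter "partition state" needlessly, too long and requests hang.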

The article stresses that these are not binary decisions, but that there is rather a whole spectrum of possible actions and strategies to choose from. It's not about saying "I can't have consistency and availability, so I'll just forget about consistency", it's about saying "in case of a failure, availability is more important to me, therefore I will accept temporary inconsistencies, and implement strategies to clean up afterwards".

When you look at it that way, you get a much clearer picture of how a database like Cassandra fits into this, and how its read repair and hinted handoff features work to regain consistency, although in a very lax (and eventual) way.
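As a rough illustration of the two ideas, here is a toy sketch of hinted handoff and read repair. It is not how Cassandra implements them: the `Node` class, the integer version numbers, and the "highest version wins" rule are simplifying assumptions of mine.

```python
class Node:
    def __init__(self, name):
        self.name = name
        self.up = True
        self.store = {}  # key -> (version, value)
        self.hints = []  # (target_node, key, version, value) kept for down nodes

    def put(self, key, version, value):
        # Assumed conflict rule: the highest version wins.
        current = self.store.get(key)
        if current is None or version > current[0]:
            self.store[key] = (version, value)


def write(coordinator, replicas, key, version, value):
    """Write to every reachable replica; keep a hint for each unreachable one."""
    for node in replicas:
        if node.up:
            node.put(key, version, value)
        else:
            coordinator.hints.append((node, key, version, value))


def replay_hints(coordinator):
    """Hinted handoff: deliver stored writes once their target is back."""
    remaining = []
    for node, key, version, value in coordinator.hints:
        if node.up:
            node.put(key, version, value)
        else:
            remaining.append((node, key, version, value))
    coordinator.hints = remaining


def read(replicas, key):
    """Read repair: return the newest value and push it to stale replicas."""
    live = [node for node in replicas if node.up]
    found = [node.store[key] for node in live if key in node.store]
    if not found:
        return None
    version, value = max(found, key=lambda entry: entry[0])
    for node in live:
        node.put(key, version, value)
    return value
```

Both mechanisms repair replicas after the fact: hinted handoff when the missing node returns, read repair when someone happens to read the key. Until one of them runs, a stale replica stays stale, which is the "lax and eventual" part.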

But it also becomes clear that it's simply not true that a distributed database cannot be highly available and still come with consistency guarantees. The article goes on to discuss recent research results which try to achieve exactly that: strategies to minimize the impact of a partition on availability and consistency, and ways to re-establish consistency after a partition (also in the broader database sense of having consistent cross-references between tables and satisfying other invariants).

So the next time someone tells you he doesn't care about consistency because of the CAP theorem, ask him how he chooses P, and how he deals with the detection, handling, and cleanup of partitions.


Comments (1)

Mikio, good reads on CAP, NoSQL and Spanner. Check out FoundationDB. NoSQL with full ACID transactions. But without the atomic clocks...